Frame synchronization is attracting growing attention in video communications. This article explains why it matters, how it is implemented, and how remote IP broadcasting is changing live video production.

1. Definition of Frame Synchronization

In the broadcasting industry, frame synchronization ensures that audio and video signals transmitted through different paths can be played synchronously at the receiving end based on the same timeline. Its purpose is to eliminate time discrepancies caused by different signal transmission paths, ensuring collaborative work and synchronized playback among various signal sources in broadcasting or video production.
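A shared timeline requires every device to agree on the time. Section 3.1 names NTP as one way to obtain network time. The sketch below shows the standard NTP offset and round-trip-delay calculation from the four timestamps of one request/response exchange, and, in the spirit of NTP's clock filter, prefers the sample with the smallest round-trip delay. The `NtpSample` type and the function names are hypothetical illustrations, not part of any MiNE Media API.

```python
from dataclasses import dataclass
from typing import Iterable, Tuple


@dataclass
class NtpSample:
    """One request/response exchange (hypothetical container, times in seconds)."""
    t0: float  # client transmit time, read from the client clock
    t1: float  # server receive time, read from the server clock
    t2: float  # server transmit time, read from the server clock
    t3: float  # client receive time, read from the client clock


def offset_and_delay(s: NtpSample) -> Tuple[float, float]:
    # Offset: how far the server clock is ahead of the client clock,
    # assuming the outbound and return paths take equal time.
    offset = ((s.t1 - s.t0) + (s.t2 - s.t3)) / 2
    # Round-trip network delay, excluding the server's processing time.
    delay = (s.t3 - s.t0) - (s.t2 - s.t1)
    return offset, delay


def best_offset(samples: Iterable[NtpSample]) -> float:
    # The lowest-delay sample is least distorted by asymmetric queuing,
    # so its offset is the most trustworthy.
    return min((offset_and_delay(s) for s in samples), key=lambda od: od[1])[0]


# The client clock runs 0.5 s behind the server in both samples.
symmetric = NtpSample(t0=10.0, t1=10.52, t2=10.521, t3=10.041)   # 20 ms each way
asymmetric = NtpSample(t0=20.0, t1=20.7, t2=20.701, t3=20.221)   # 200 ms out, 20 ms back
print(best_offset([symmetric, asymmetric]))  # approximately 0.5
```

Adding the chosen offset to the local clock maps device time onto the server's timeline. The asymmetric sample yields an offset of about 0.59 s instead of 0.5 s, which is why the equal-path assumption makes low-delay samples preferable.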

Specifically, frame synchronization covers the following key aspects:

1. Time synchronization: All signal sources share a consistent timeline at the receiving end, which prevents audio-video desynchronization when mixing or switching between sources.

2. Frame-rate consistency: Beyond aligning time, frame synchronization keeps the frame rates of all sources consistent, so playback stays smooth and free of frame skips or stuttering caused by mismatched frame rates.

3. Collaboration of signal sources: Different sources (cameras, audio devices, etc.) work together during playback against a single time reference. This is crucial for live broadcasting, television production, and similar fields.

4. Handling network latency: Frame synchronization also accounts for the latency introduced when signals travel over a network. By buffering and aligning the received signals, it minimizes synchronization errors caused by network delay.
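Frame-rate consistency can be achieved in several ways. The simplest, sketched below under the assumption that both rates are known exactly, is sample-and-hold conversion: each output frame shows the most recent source frame captured at or before its presentation time, so a faster source drops frames and a slower one repeats them. Professional converters often use motion-compensated interpolation instead; the function names here are hypothetical.

```python
import math
from fractions import Fraction
from typing import List


def source_frame_for(out_index: int, src_fps: Fraction, out_fps: Fraction) -> int:
    # Output frame n is presented at t = n / out_fps; the latest source frame
    # captured at or before t has index floor(t * src_fps).
    return math.floor(out_index * src_fps / out_fps)


def resample_plan(n_out: int, src_fps: Fraction, out_fps: Fraction) -> List[int]:
    """Source frame index to show for each of the first n_out output frames."""
    return [source_frame_for(n, src_fps, out_fps) for n in range(n_out)]


# Exact rational rates avoid drift; 29.97 fps would be Fraction(30000, 1001).
print(resample_plan(11, Fraction(30), Fraction(25)))  # [0, 1, 2, 3, 4, 6, 7, 8, 9, 10, 12]
print(resample_plan(7, Fraction(25), Fraction(30)))   # [0, 0, 1, 2, 3, 4, 5]
```

Converting 30 fps to 25 fps drops every sixth source frame (5 and 11 are skipped), while 25 fps to 30 fps repeats one frame in six.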

In summary, frame synchronization is a key technology in the broadcasting industry, ensuring collaborative work and synchronized playback of different signal sources, providing viewers with a high-quality and smooth audio-visual experience.

2. Why Is Frame Synchronization Necessary?

2.1 Challenges of Video Return Transmission

When video signals are transmitted back (returned) over IP networks, each video signal travels over its own independent network path. Because link quality, latency, jitter, and packet loss differ between paths, video from different camera positions arrives at the backend receiver at different times, which causes frame synchronization problems.
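One common way to absorb these differences, offered here as an assumption rather than a description of any specific product, is to give every stream the same fixed playout delay: a frame captured at time t is output at t + D, where D covers the slowest stream's delay plus a safety margin. The sketch estimates D from per-stream one-way delays measured against the shared timeline, using a nearest-rank percentile. The sample values, the 99th-percentile choice, and the 20 ms margin are illustrative, and the function names are hypothetical.

```python
import math
from typing import Dict, List


def percentile(values: List[float], p: float) -> float:
    """Nearest-rank percentile, p in (0, 100]."""
    ordered = sorted(values)
    rank = math.ceil(p / 100 * len(ordered))
    return ordered[max(rank, 1) - 1]


def common_playout_delay(delays_ms: Dict[str, List[float]],
                         p: float = 99.0, margin_ms: float = 20.0) -> float:
    # The slowest stream sets the pace: every stream waits as long as it does.
    return max(percentile(d, p) for d in delays_ms.values()) + margin_ms


def is_late(capture_ms: float, arrival_ms: float, playout_delay_ms: float) -> bool:
    # A frame arriving after its scheduled output time cannot be aligned.
    return arrival_ms > capture_ms + playout_delay_ms


measured = {
    "cam1": [40, 42, 45, 50, 41],       # illustrative: stable link
    "cam2": [120, 110, 130, 125, 118],  # illustrative: mobile camera, congested link
}
delay = common_playout_delay(measured)
print(delay)  # 150.0
```

A larger margin tolerates more jitter at the cost of more end-to-end latency; this trade-off is what the "slight delay" of remote production pays for.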

2.2 Examples of Application Scenarios

Sports event broadcasting: In events such as marathons, cameras must follow moving participants, so it is hard to maintain a consistent network connection. The resulting variation in video arrival times degrades the viewer experience.

Rocket launch broadcasting: A rocket launch is covered by multiple camera positions at different angles and distances. Without synchronization, one camera may already show ignition while another still shows the rocket waiting, which undermines the viewing experience.

Security surveillance: In control rooms where multiple video signals are shown on a large screen, the signals must be transmitted and displayed synchronously to give a clear picture of the on-site situation. Recorded streams must also stay synchronized during playback; otherwise it is impossible to analyze how events in different streams relate at the same moment.
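For synchronized playback of recordings, each stream can be positioned at the same wall-clock instant rather than the same frame index, because cameras start recording at different times and may run at different frame rates. The sketch assumes each recording stores the shared-timeline (for example NTP-aligned) time of its first frame and a constant frame rate; the `Recording` type, its fields, and the function names are hypothetical.

```python
import math
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from fractions import Fraction
from typing import Dict, List, Optional


@dataclass
class Recording:
    name: str
    start: datetime   # shared-timeline time of frame 0 (assumed to be recorded)
    fps: Fraction     # constant frame rate (assumption)
    frame_count: int


def frame_at(rec: Recording, instant: datetime) -> Optional[int]:
    """Index of the frame on screen at `instant`, or None if outside the recording."""
    elapsed_us = (instant - rec.start) // timedelta(microseconds=1)  # exact integer
    if elapsed_us < 0:
        return None
    index = math.floor(Fraction(elapsed_us, 1_000_000) * rec.fps)
    return index if index < rec.frame_count else None


def sync_seek(recs: List[Recording], instant: datetime) -> Dict[str, Optional[int]]:
    # Every stream is positioned at the same moment, not the same frame number.
    return {r.name: frame_at(r, instant) for r in recs}


t0 = datetime(2023, 9, 17, 12, 0, 0, tzinfo=timezone.utc)
recs = [
    Recording("camA", t0, Fraction(25), 90_000),
    Recording("camB", t0 + timedelta(milliseconds=480), Fraction(30), 108_000),
]
print(sync_seek(recs, t0 + timedelta(seconds=10)))        # {'camA': 250, 'camB': 285}
print(sync_seek(recs, t0 + timedelta(milliseconds=200)))  # {'camA': 5, 'camB': None}
```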

2.3 Importance of Command and Dispatch

In scenarios such as firefighting and rescue, real-time traffic monitoring, and disaster response, accurate analysis and handling of the situation require multiple screens to be precisely synchronized. Misjudgments caused by unsynchronized footage can lead to severe losses.

3. Introduction to Frame Synchronization Technology

3.1 Principles of Network Video Return Frame Synchronization

The core of network video return frame synchronization is precise time calibration at the front-end transmitter, combined with SEI (Supplemental Enhancement Information) messages in video coding standards such as H.264 and H.265. The transmitter first obtains network time, for example via NTP, and then embeds this time together with the video frame number into an SEI message in the encoded stream. As a result, every frame carries its own timestamp and frame number.

The SEI data travels over the network with the video to the backend receiver, where it is decoded. By reading the timestamps and frame numbers from the SEI messages, the receiver can run multiple returned video streams in lockstep: it aligns the streams on the same time and frame number and then sends them to the output ports together, so the outputs of all camera positions are fully synchronized.

Note that the frame synchronization solutions commonly available on the market combine multiple live broadcast backpacks with servers. These solutions have limitations: they require public IP addresses to be configured, the servers are fixed in place, and costs are relatively high, which makes them inconvenient and expensive in practice.
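To make the SEI step concrete, here is a minimal sketch that packs a timestamp and frame number into an H.264 `user_data_unregistered` SEI message (payloadType 5: a 16-byte UUID followed by arbitrary bytes), applies emulation-prevention bytes so the payload cannot imitate a start code, and parses it back. The UUID, the layout (8-byte millisecond timestamp plus 4-byte frame number), and the function names are hypothetical; the article does not specify the payload format MiNE Media uses. H.265 uses a two-byte NAL header and prefix SEI type 39, which this sketch does not cover.

```python
import struct
import uuid
from typing import Optional, Tuple

# Hypothetical UUID marking our sync payload; any fixed 16-byte value works.
SYNC_UUID = uuid.UUID("6b1f0a3e-2c4d-4e5f-8a9b-0c1d2e3f4a5b")
START_CODE = b"\x00\x00\x00\x01"  # Annex B start code
NAL_TYPE_SEI = 6
PAYLOAD_USER_DATA_UNREGISTERED = 5


def add_emulation_prevention(rbsp: bytes) -> bytes:
    # Insert 0x03 after any two zero bytes that are followed by 0x00-0x03.
    out, zeros = bytearray(), 0
    for b in rbsp:
        if zeros >= 2 and b <= 3:
            out.append(3)
            zeros = 0
        out.append(b)
        zeros = zeros + 1 if b == 0 else 0
    return bytes(out)


def remove_emulation_prevention(ebsp: bytes) -> bytes:
    # Drop the 0x03 that follows two zero bytes.
    out, zeros = bytearray(), 0
    for b in ebsp:
        if zeros >= 2 and b == 3:
            zeros = 0
            continue
        out.append(b)
        zeros = zeros + 1 if b == 0 else 0
    return bytes(out)


def _ff_coded(n: int) -> bytes:
    # SEI payloadType/payloadSize coding: one 0xFF per 255, then the remainder.
    return b"\xff" * (n // 255) + bytes([n % 255])


def _read_ff_coded(data: bytes, i: int) -> Tuple[int, int]:
    value = 0
    while data[i] == 0xFF:
        value += 255
        i += 1
    return value + data[i], i + 1


def build_sync_sei(timestamp_ms: int, frame_number: int) -> bytes:
    payload = SYNC_UUID.bytes + struct.pack(">QI", timestamp_ms, frame_number)
    rbsp = (_ff_coded(PAYLOAD_USER_DATA_UNREGISTERED) + _ff_coded(len(payload))
            + payload + b"\x80")  # 0x80 = rbsp_trailing_bits
    # NAL header 0x06: forbidden_zero_bit 0, nal_ref_idc 0, nal_unit_type 6 (SEI).
    return START_CODE + bytes([NAL_TYPE_SEI]) + add_emulation_prevention(rbsp)


def parse_sync_sei(nal: bytes) -> Optional[Tuple[int, int]]:
    """Parse the first SEI message of a NAL unit given without its start code."""
    if not nal or (nal[0] & 0x1F) != NAL_TYPE_SEI:
        return None
    rbsp = remove_emulation_prevention(nal[1:])
    ptype, i = _read_ff_coded(rbsp, 0)
    psize, i = _read_ff_coded(rbsp, i)
    payload = rbsp[i:i + psize]
    if (ptype != PAYLOAD_USER_DATA_UNREGISTERED or len(payload) < 28
            or payload[:16] != SYNC_UUID.bytes):
        return None
    return struct.unpack(">QI", payload[16:28])


sei = build_sync_sei(timestamp_ms=1_694_912_400_000, frame_number=0)
print(parse_sync_sei(sei[len(START_CODE):]))  # (1694912400000, 0)
```

The example values deliberately contain runs of zero bytes, so the emulation-prevention step is exercised. In a real pipeline the encoder or muxer would place this NAL unit in the same access unit as the frame it describes; how that is done depends on the encoder and is not shown.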

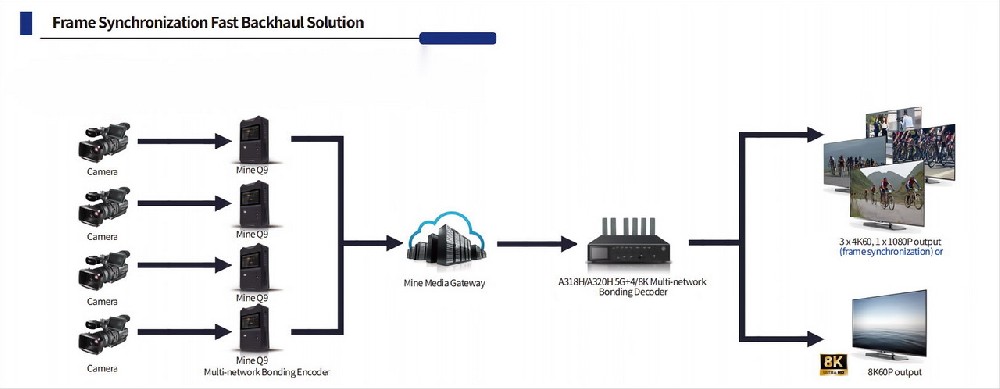

3.2 MiNE Media Frame Synchronization Overview

The MiNE Media frame synchronization solution consists of multiple Q9 live broadcast backpacks at the front end and A318/A320H 5G decoders at the receiving end. It supports synchronized output of up to 16 channels without requiring public IP addresses. Built on MiNE Media's media gateway technology, the solution provides not only frame synchronization but also multi-network aggregation, streaming from the front end to multiple platforms, and low-latency stream pulling, forming a complete video return solution.

4. Future Trends of Remote IP Broadcasting

4.1 Traditional Broadcasting Costs and Challenges

Anyone who regularly produces live broadcasts knows how complex it is. After taking on a live broadcast job, you must arrange equipment and personnel and transport the equipment to the venue, often over long distances. This incurs equipment shipping fees, travel and accommodation costs for staff, the risk of damage to equipment in transit, and more.

If remote IP broadcasting can be realized, with video from each camera position returned over the network by streaming devices, then an event needs only camera operators and an on-site coordinator or director. The director, graphics (packaging) and post-production staff, and their equipment can stay in a studio or at the company, significantly reducing costs and improving efficiency.

Remote IP production depends first on returning the video signals, and synchronized output of the returned signals is a basic requirement. As long as the video returned from the camera positions is frame-synchronized, the result is essentially the same as on-site production; the only difference is a slight broadcast delay compared with on-site production.
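The receiver-side alignment described in Section 3.1 can be sketched as a small buffer that releases a set of frames only when every camera has delivered a frame with the same timestamp, which is also where the slight delay comes from: the output waits for the slowest feed. The sketch assumes in-order delivery within each stream and timestamps already snapped to a common frame grid. `FrameAligner` is a hypothetical name, and a production receiver would also need a deadline so that one stalled camera cannot block the others.

```python
from collections import defaultdict
from typing import Any, Dict, Iterable, List, Optional, Tuple

AlignedSet = Tuple[int, Dict[str, Any]]


class FrameAligner:
    """Releases frames from all streams together, keyed by shared timestamp."""

    def __init__(self, stream_ids: Iterable[str]) -> None:
        self.stream_ids = set(stream_ids)
        self.pending: Dict[int, Dict[str, Any]] = defaultdict(dict)
        self.last_released: Optional[int] = None

    def push(self, stream_id: str, timestamp: int, frame: Any) -> List[AlignedSet]:
        if stream_id not in self.stream_ids:
            raise ValueError(f"unknown stream {stream_id!r}")
        if self.last_released is not None and timestamp <= self.last_released:
            return []  # too late: this instant has already been output
        self.pending[timestamp][stream_id] = frame
        released: List[AlignedSet] = []
        for ts in sorted(self.pending):
            if len(self.pending[ts]) < len(self.stream_ids):
                continue  # still waiting for at least one camera
            # Each stream delivers in order, so once every stream has reached ts,
            # older incomplete groups can never complete (their frames were lost).
            for old in [t for t in self.pending if t < ts]:
                del self.pending[old]
            released.append((ts, dict(self.pending.pop(ts))))
            self.last_released = ts
        return released


aligner = FrameAligner(["cam1", "cam2"])
aligner.push("cam1", 0, "c1-f0")         # cam2 has not delivered frame 0 yet
print(aligner.push("cam2", 0, "c2-f0"))  # [(0, {'cam1': 'c1-f0', 'cam2': 'c2-f0'})]
```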

4.2 Implementation Solutions for Remote IP Broadcasting

The MiNE Q9 + A318 frame-synchronized return solution illustrates this approach and has been used successfully in practice, including at the "China Leisure Sports Challenge" described in Section 5. A key element is the 5G aggregation built into the A318H, which removes the need to configure a public IP address on the broadcast vehicle and makes video return more convenient and efficient.
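Multi-network aggregation generally means spreading one stream's packets over several links and restoring their order at the far end. The sketch below is a generic illustration, not MiNE Media's algorithm: it assigns packets to links with smooth weighted round-robin using fixed, hypothetical capacity weights, and reorders them by sequence number at the receiver. Real bonding systems continuously measure each link's bandwidth, RTT, and loss, and usually add retransmission or FEC.

```python
from typing import Dict, List


class WeightedLinkScheduler:
    """Smooth weighted round-robin: each link gets packets in proportion to its weight."""

    def __init__(self, weights: Dict[str, int]) -> None:
        self.weights = dict(weights)
        self.current = {link: 0 for link in weights}
        self.total = sum(weights.values())

    def next_link(self) -> str:
        for link, w in self.weights.items():
            self.current[link] += w
        chosen = max(self.current, key=self.current.get)  # ties go to the first link
        self.current[chosen] -= self.total
        return chosen


class ReorderBuffer:
    """Receiver side: releases packets strictly in sequence-number order."""

    def __init__(self) -> None:
        self.next_seq = 0
        self.buffer: Dict[int, bytes] = {}

    def receive(self, seq: int, data: bytes) -> List[bytes]:
        if seq < self.next_seq:
            return []  # duplicate of a packet already delivered
        self.buffer[seq] = data
        ready = []
        # A real receiver would also skip a missing sequence number after a timeout.
        while self.next_seq in self.buffer:
            ready.append(self.buffer.pop(self.next_seq))
            self.next_seq += 1
        return ready


# Hypothetical relative capacities of three links.
sched = WeightedLinkScheduler({"5g-a": 3, "5g-b": 2, "4g": 1})
print([sched.next_link() for _ in range(6)])
# ['5g-a', '5g-b', '5g-a', '4g', '5g-b', '5g-a']
```

Smooth weighted round-robin interleaves the links instead of sending bursts to one link, which keeps per-link queues short and limits how far packets arrive out of order.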

5. Case Sharing

MiNE Q9 + A318 Frame-Synchronized Return: First Practical Deployment (Featured on Five CCTV Sports Channels)

On September 17, 2023, the "Green Waters and Blue Mountains" China Leisure Sports Challenge (Yixing Station) began.

The MiNE Q9 front end provides real-time video return with built-in 5G multi-network aggregation, allowing flexible deployment on the move.

The Q9 is paired with the MiNE A318H, which also has built-in 5G aggregation and integrates directly with mobile broadcast vehicles without a separate public IP configuration. Because no fixed IP is required on the broadcast vehicle, the return workflow is more convenient and efficient.

6. Introduction to More Frame Synchronization Solutions

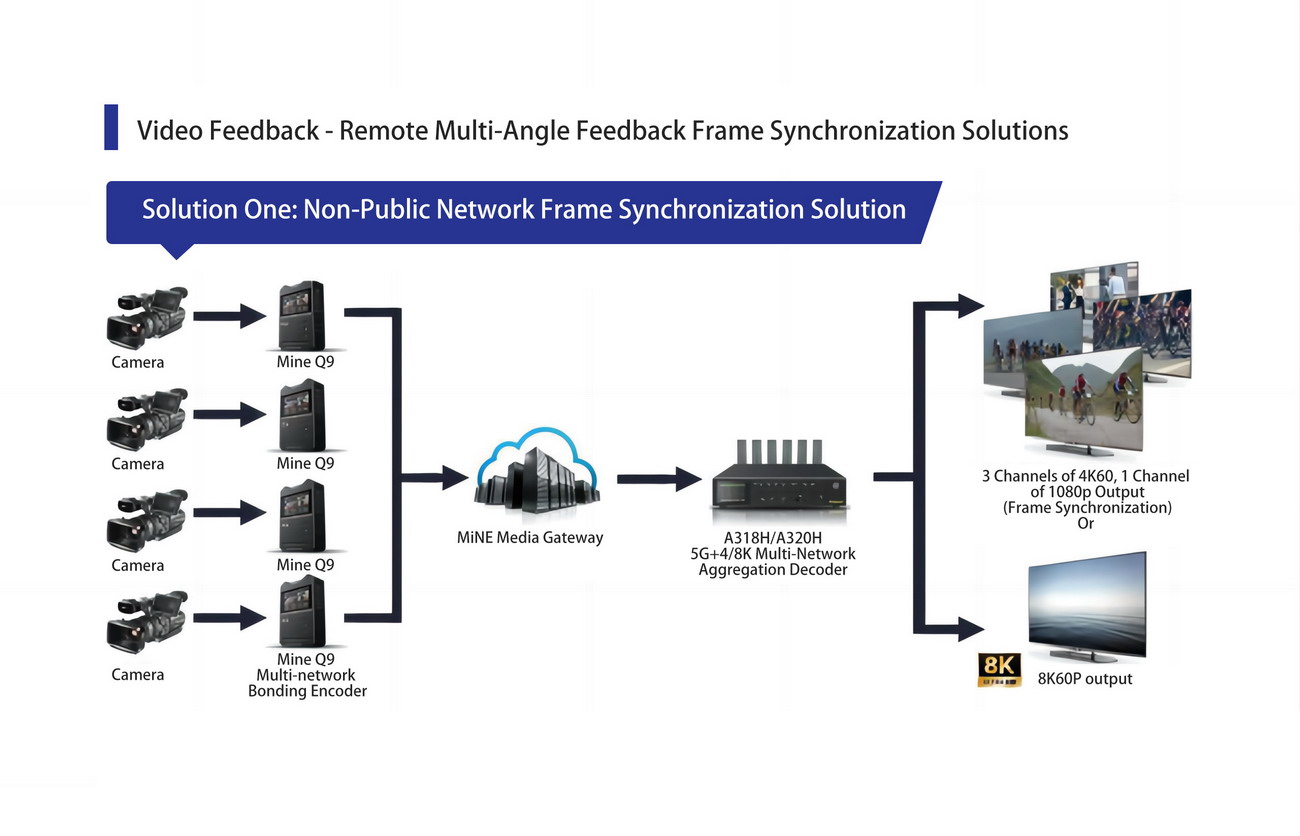

Video Return: Remote Multi-Angle Frame Synchronization Solutions

Solution One: Non-Public Network Frame Synchronization Solution

Solution Features:

1. No public IP address required; can be deployed anytime, anywhere, including in vehicles.

2. Supports frame-synchronized output of multiple 4K return feeds.

3. Multi-angle frame-synchronized output through four independent HDMI output ports.

4. Both the transmitting and receiving ends support 5G network aggregation.

Solution Two: Private Deployment Frame Synchronization Solution

Solution Features:

1. Dedicated service with backend monitoring.

2. Multi-angle frame-synchronized output with 4 or 8 channels of SDI output.

3. Requires a public IP address.

4. Devices are managed independently by the user.

Emergency Video Return Solutions

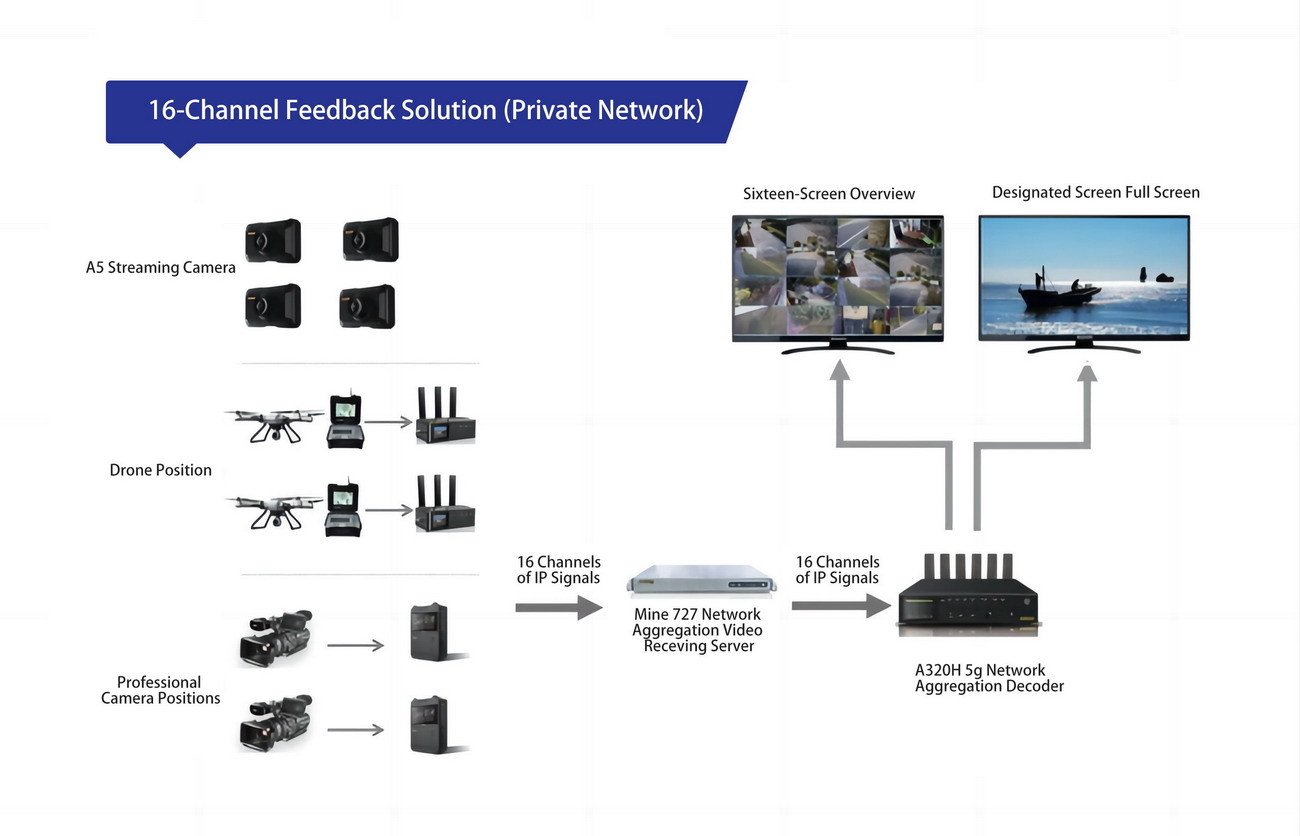

1. Solution One: 16-Channel Return Solution (Public Network)

2. Solution Two: 16-Channel Return Solution (Private Network)

Follow MiNEMedia to learn more about advanced video codec and network transmission technology.